1. Depth Image Based Rendering

Depth image-based rendering (DIBR) is the process of synthesizing a virtual view from a real view with associated depth information in stereo vision.

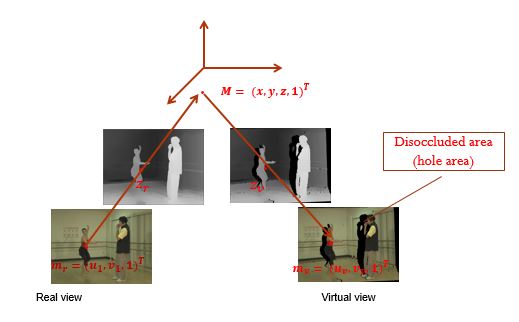

Consider a 3D point \(M=(x, y, z)^T\) in world coordinates and its projections \(m_l=(u_l,v_l, 1)^T\) and \(m_r=(u_r,v_r, 1)^T\) in the left and right views.

From camera calibration, we know the projection equation for each camera:

\[z_lm_l = K_l(R_lM + t_l) \tag{1}\]

\[z_rm_r = K_r(R_rM + t_r) \tag{2}\]

where \(K_l\) and \(K_r\) are the intrinsic matrices, \([R_l|t_l]\) and \([R_r|t_r]\) are the rotation and translation (extrinsic) parameters, and \(z_l\) and \(z_r\) are the depths of \(M\) in the left and right cameras.
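As an illustration of the projection equations \((1)\) and \((2)\), here is a minimal NumPy sketch. The helper name `project` and the camera values (focal length, principal point, identity pose) are made up for the example and do not come from any dataset.

```python
import numpy as np

def project(K, R, t, M):
    """Project a 3D world point M with z * m = K (R M + t); return (m, z)."""
    p = K @ (R @ M + t)   # p equals z * m
    z = p[2]              # m = (u, v, 1)^T, so the third component is the depth z
    return p / z, z

# Hypothetical camera: focal length 1000 px, principal point (512, 384),
# placed at the world origin with no rotation.
K = np.array([[1000.0, 0.0, 512.0],
              [0.0, 1000.0, 384.0],
              [0.0, 0.0, 1.0]])
R = np.eye(3)
t = np.zeros(3)

M = np.array([0.5, -0.25, 5.0])
m, z = project(K, R, t, M)
print(m, z)
```

Dividing by the third component is what turns the homogeneous vector \(z\,m\) into pixel coordinates \((u, v, 1)^T\).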

Suppose that \(K_l\), \(K_r\), \([R_l|t_l]\), \([R_r|t_r]\), \(z_l\), \(z_r\), and \(m_l\) are known in advance. The DIBR process then computes \(m_r\).

Synthesizing the right view from the left view consists of two steps:

- Project the 2D point \(m_l\) from the left image plane back into world coordinates as a 3D point \(M\).

  From \((1)\):

  \[M=z_lR_l^{-1}K_l^{-1}m_l - R_l^{-1}t_l \tag{3}\]

- Project the 3D point \(M\) from world coordinates onto the right image plane as the 2D point \(m_r\).

  Substituting \((3)\) into \((2)\):

  \[m_r = \frac{1}{z_r}(z_lK_rR_rR_l^{-1}K_l^{-1}m_l - K_rR_rR_l^{-1}t_l + K_rt_r)\tag{4}\]
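The two steps can be sketched for a single pixel as follows. This is a minimal NumPy illustration, not the demo's actual code: `backproject` and `warp_point` are hypothetical helper names, and the camera parameters are invented. Because the last component of \(m_r\) is 1, the sketch reads \(z_r\) off the third component of the right-hand side of \((2)\) instead of taking it as an input.

```python
import numpy as np

def backproject(K_l, R_l, t_l, m_l, z_l):
    """Eq. (3): lift pixel m_l with depth z_l to a 3D world point M."""
    # R_l is a rotation, so its inverse is its transpose.
    return R_l.T @ (z_l * np.linalg.solve(K_l, m_l) - t_l)

def warp_point(K_l, R_l, t_l, K_r, R_r, t_r, m_l, z_l):
    """Eq. (4): map pixel m_l in the left view to (m_r, z_r) in the right view."""
    M = backproject(K_l, R_l, t_l, m_l, z_l)
    p = K_r @ (R_r @ M + t_r)   # p equals z_r * m_r
    z_r = p[2]
    return p / z_r, z_r

# Hypothetical rectified pair: identical intrinsics, right camera shifted
# 0.1 units along +x, which gives t_r = (-0.1, 0, 0).
K = np.array([[1000.0, 0.0, 512.0],
              [0.0, 1000.0, 384.0],
              [0.0, 0.0, 1.0]])
R_l, t_l = np.eye(3), np.zeros(3)
R_r, t_r = np.eye(3), np.array([-0.1, 0.0, 0.0])

m_l = np.array([612.0, 334.0, 1.0])
m_r, z_r = warp_point(K, R_l, t_l, K, R_r, t_r, m_l, z_l=5.0)
print(m_r, z_r)
```

In this rectified setup the pixel moves 20 px to the left, which matches the familiar disparity formula (focal length times baseline divided by depth: 1000 × 0.1 / 5).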

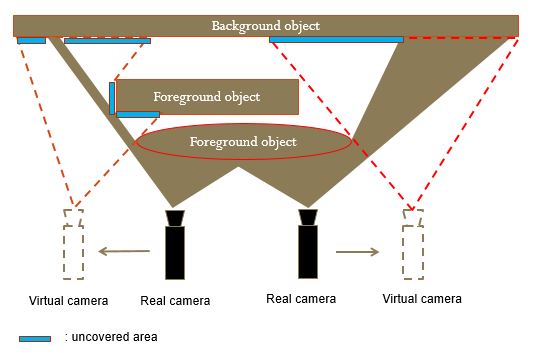

Equation \((4)\) is used to generate the virtual view. However, regions that are occluded in the left view can become visible in the right view (the disocclusion problem), so the synthesized image may contain holes with no known color.

These uncovered areas are usually filled in afterwards, for example with the Object Removal by Exemplar-based Inpainting algorithm or the Hole-Filling Algorithm with Spatio-Temporal Background Information for View Synthesis.
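To make the holes concrete, the following sketch forward-warps a whole image with equation \((4)\) and returns a mask of target pixels that no source pixel reaches. It is an illustration under stated assumptions, not the demo's implementation: `forward_warp` is a hypothetical helper, target coordinates are rounded to the nearest pixel, and a simple z-buffer keeps the nearest surface when several source pixels land on the same target. The toy scene (a near object in front of a flat background) is invented.

```python
import numpy as np

def forward_warp(image, depth, K_l, R_l, t_l, K_r, R_r, t_r):
    """Warp the left image into the right view; return (warped, holes)."""
    h, w = depth.shape
    v, u = np.mgrid[0:h, 0:w]
    m_l = np.stack([u.ravel(), v.ravel(), np.ones(h * w)])   # 3 x N pixels
    # Eq. (3): back-project every pixel to a world point.
    M = R_l.T @ (depth.ravel() * np.linalg.solve(K_l, m_l) - t_l[:, None])
    p = K_r @ (R_r @ M + t_r[:, None])                       # z_r * m_r
    z_r = p[2]

    src = np.flatnonzero(z_r > 0)          # points in front of the right camera
    u_r = np.rint(p[0, src] / z_r[src]).astype(int)
    v_r = np.rint(p[1, src] / z_r[src]).astype(int)
    inside = (u_r >= 0) & (u_r < w) & (v_r >= 0) & (v_r < h)
    src, dst = src[inside], (v_r * w + u_r)[inside]

    # Z-buffer: for each target pixel keep the smallest depth that maps to it.
    zbuf = np.full(h * w, np.inf)
    np.minimum.at(zbuf, dst, z_r[src])
    nearest = z_r[src] == zbuf[dst]

    pixels = image.reshape(h * w, -1)
    out = np.zeros_like(pixels)
    out[dst[nearest]] = pixels[src[nearest]]
    holes = np.isinf(zbuf).reshape(h, w)   # True where nothing was written
    return out.reshape(image.shape), holes

# Toy scene: background at depth 2, a near object at depth 1 in columns 4-5.
K = np.array([[2.0, 0.0, 0.0], [0.0, 2.0, 0.0], [0.0, 0.0, 1.0]])
R, t_l, t_r = np.eye(3), np.zeros(3), np.array([-1.0, 0.0, 0.0])
image = np.tile(np.arange(8) * 10, (2, 1))   # pixel value encodes its column
depth = np.full((2, 8), 2.0)
depth[:, 4:6] = 1.0

warped, holes = forward_warp(image, depth, K, R, t_l, K, R, t_r)
print(warped[0])
print(holes[0])
```

In this toy scene the near object shifts by two pixels and the background by one, so the object hides one background column and exposes another. The exposed column shows up in `holes`, along with the image border that the left view never covered. A hole-filling or inpainting step would operate on exactly those masked pixels.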

2. Experiment

This demo uses the MSR 3D Video Ballet dataset to show how to synthesize the virtual view of the 5th camera from the real view of the 4th camera, and to illustrate the disocclusion problem.

3. References